Why AIO Readiness Is the New Competitive Battleground

The way people find information online has fundamentally changed. Not gradually. Not in some distant future that marketing teams can plan for next quarter. Right now, in 2026, nearly half of all Google searches trigger an AI Overview that answers the user's question before they ever reach a website. ChatGPT processes billions of queries monthly. Perplexity handles 780 million. And the trajectories are all pointing in the same direction.

Traditional search engine optimization prepared organizations for a world where humans scanned a list of ten blue links and chose which to click. That world is being replaced by one where AI systems read, interpret, synthesize, and present information on the user's behalf. The question is no longer whether your website ranks on page one. The question is whether AI can find your content, understand it, trust it, and cite it when a potential customer asks a question you should be answering.

The practice of adapting to this shift goes by several names: Generative Engine Optimization, Answer Engine Optimization, or AI Overview Optimization. The terminology is still settling, but the underlying reality is not. Organizations that fail to optimize for AI-driven discovery are building their digital presence on a foundation that is actively eroding beneath them.

The Numbers Behind the Shift

The data on changing user behavior is not subtle. According to Gartner, traditional search engine volume is projected to drop 25% by the end of 2026, with AI chatbots and virtual agents absorbing that share. Bain & Company reports that 80% of consumers now rely on zero-click results for at least 40% of their searches, reducing organic web traffic by an estimated 15 to 25%.

The zero-click phenomenon predates AI Overviews, but AI has accelerated it dramatically. Before Google began surfacing AI-generated summaries, roughly 56% of searches ended without a click; after the AI Overview rollout, that figure jumped to 69%. On mobile devices (where the majority of searches now occur), 77% of queries end without the user visiting another website. When an AI Overview is specifically present, the zero-click rate reaches 83%.

For websites that do still earn clicks, the landscape has shifted. Seer Interactive's September 2025 study found organic click-through rates plummeted 61% for queries where AI Overviews appeared, dropping from 1.76% to 0.61%. Ahrefs confirmed this trajectory in February 2026, reporting a 58% lower average click-through rate for top-ranking pages when an AI Overview is present.

These are not projections or speculative forecasts. They describe what is happening today to every website that relies on organic search traffic.

The AI Search Shift by the Numbers

25%: projected drop in traditional search volume by end of 2026

83%: zero-click rate when AI Overviews are present

14.2%: conversion rate from AI search traffic vs 2.8% from Google organic

4.4x: more qualified visitors from AI platforms than traditional search

Up to 40%: visibility improvement from GEO optimization techniques

$7.3B: projected GEO services market by 2031, up from $886M in 2024

The Industry-by-Industry Impact

AI Overviews have not rolled out uniformly across sectors, and the industries most affected are often the ones where digital presence is most critical to growth.

Health and wellness queries now trigger AI Overviews 43% of the time, making this one of the most impacted verticals. For health systems, pharmaceutical companies, and wellness brands, informational content that previously drove patient acquisition and product discovery is now being synthesized into AI-generated summaries. When a prospective patient asks an AI engine about treatment options or a wellness protocol, the cited sources need to demonstrate E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) signals through credentialed authorship and clinical specificity. Organizations without Person schema on their provider profiles and without FAQ content addressing common patient questions are effectively invisible in these conversations.

Financial services faces a similar challenge. Queries about investment products, insurance comparisons, and regulatory compliance increasingly produce AI Overview responses. The stakes are particularly high here because AI systems apply extra scrutiny to financial content under Google's YMYL (Your Money or Your Life) guidelines. Financial institutions that lack Organization schema with verifiable credentials, author attribution on advisory content, and citation-rich thought leadership are losing ground to competitors who have these signals in place.

Public sector organizations occupy a unique position. Government websites and public-facing agencies are frequently cited as authoritative sources by AI systems, but only when their content is technically accessible. Many government sites still rely on legacy CMS platforms with limited schema support and inconsistent structured data. The opportunity is significant: public sector entities that modernize their schema deployment and content structure can become the default cited source for policy, regulatory, and civic information queries.

Associations and nonprofits depend heavily on thought leadership and member-facing content to demonstrate value. When a prospective member or donor asks an AI system about an industry trend or professional development topic, the association that gets cited is the one with substantive, attributed, schema-marked content. Many associations publish valuable research and position papers but fail to structure that content for AI discoverability, burying it behind generic page templates without FAQPage schema or author attribution.

Manufacturing and industrial companies are seeing AI Overviews appear more frequently for technical specification queries, material comparisons, and process questions. B2B buyers increasingly use AI tools to research vendors and compare capabilities before ever visiting a supplier's website. Manufacturers with deep technical content, ProfessionalService schema for each capability, and FAQ sections addressing common procurement questions position themselves to be cited in these high-intent queries.

Travel and hospitality queries have seen some of the steepest AI Overview growth, jumping from 10% to 78% coverage within a single year. Destination marketing organizations, hotel brands, and hospitality companies face a reality where "best hotels in" or "things to do in" queries are answered entirely within the AI summary. Without Organization schema, rich location-based structured data, and FAQ content addressing traveler questions, these businesses forfeit visibility at the exact moment a potential customer is making a decision.

The Rise of AI Answer Engines

Google's AI Overviews are only part of the picture. A parallel ecosystem of AI-native search platforms has emerged, and it is growing at a pace that should capture every marketer's attention.

ChatGPT reached 2.8 billion monthly active users by early 2026. Its share of total internet traffic doubled between January and April 2025 alone. Perplexity AI, which positions itself explicitly as an AI-first search engine, processed 780 million queries in May 2025 — a 239% increase from August 2024. Google Gemini has captured 18.2% of the AI chatbot market, up from 5.4% just twelve months prior.

The referral traffic these platforms generate tells an even more compelling story. AI-powered search engines now account for an estimated 12 to 18% of total referral traffic, up from 5 to 8% in late 2024. That growth rate sits at 130 to 150% year-over-year as of Q1 2026. Critically, this traffic converts differently: AI search traffic converts at 14.2% compared to Google's 2.8%. Visitors arriving from AI platforms are 4.4 times more qualified than those from traditional search.

Early adopters of generative engine optimization report that 32% of their sales-qualified leads now originate from AI search. One Fortune 500 automotive client documented a 300% increase in showroom inquiries and a 500% boost in sales conversion after implementing GEO strategies. The traffic volume from AI search may still be smaller than traditional organic, but its economic value per visit is dramatically higher.

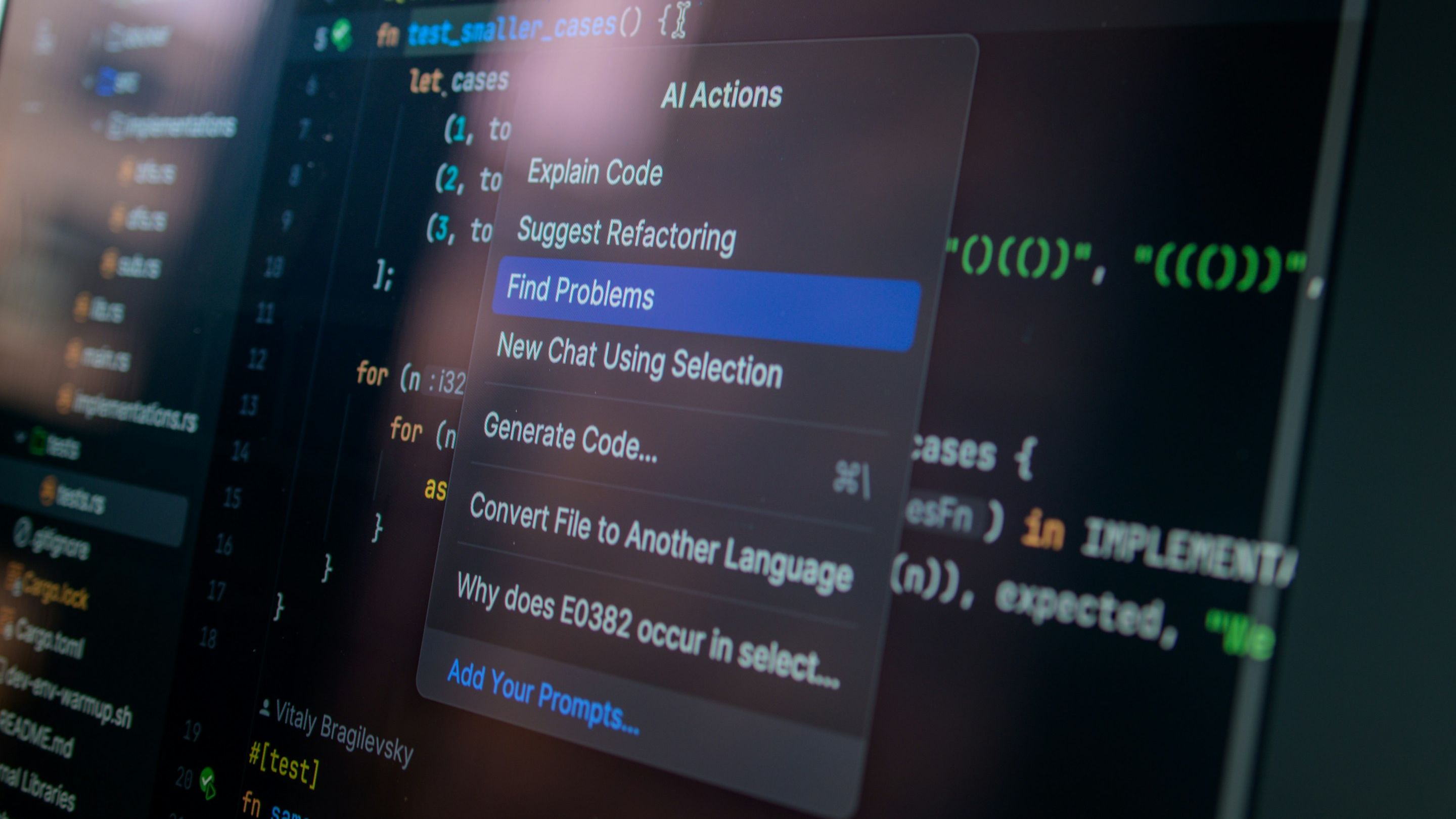

How AI Crawlers See Your Website (And What They Miss)

Understanding why AI search matters is the first step. Understanding what AI systems require from your website to cite it is where most organizations fall short. The gap between what organizations assume AI can see and what it actually can see is often wider than anyone on the marketing team realizes.

AI crawlers operate fundamentally differently from the Googlebot that SEO teams have spent two decades optimizing for. A landmark study by Vercel, analyzing over half a billion bot fetches, confirmed that none of the major AI crawlers render JavaScript: not OpenAI's GPTBot, not Anthropic's ClaudeBot, and not PerplexityBot. These crawlers download JavaScript files roughly 11.5% of the time, but they never execute them. They see only the raw HTML your server delivers on the initial request.

This technical reality has profound implications. Organizations running client-side rendered single-page applications — where content is assembled in the browser via JavaScript — are effectively invisible to most AI crawlers. The content exists for human visitors but not for the systems that are increasingly deciding which brands get mentioned in AI-generated answers.
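To make the gap concrete, here is a minimal Python sketch of what a non-rendering crawler can read: it extracts text from raw HTML and ignores anything inside script or style tags. The two sample responses and the `raw_html_text` helper are hypothetical illustrations, not a model of how any specific crawler is implemented.

```python
from html.parser import HTMLParser


class RawTextExtractor(HTMLParser):
    """Collects text that is present in the HTML before any JavaScript runs."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # greater than 0 while inside <script> or <style>
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def raw_html_text(html: str) -> str:
    """Return the readable text available in the initial HTML response."""
    parser = RawTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical responses: a client-side rendered shell and a server-rendered page.
csr_shell = '<html><body><div id="root"></div><script>renderApp()</script></body></html>'
ssr_page = (
    "<html><body><main><h1>Cloud Migration Services</h1>"
    "<p>We plan and run migrations.</p></main></body></html>"
)

print(repr(raw_html_text(csr_shell)))  # empty string: nothing to read
print(raw_html_text(ssr_page))
```

Running the same check against the HTML your own server returns (for example, a response saved with a plain HTTP client) is a quick way to see whether core content survives without JavaScript.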

Server-side rendering becomes the baseline requirement, not the optimization. Rendering content is only the beginning, though. AI systems need to understand what that content means, who created it, why it should be trusted, and how it relates to the entities and concepts a user is asking about. This is where structured data, content architecture, and authority signals converge into what we evaluate through a 12-point AIO readiness framework.

The 12-Point AIO Audit: A Framework for AI Visibility

Assessing true AI readiness requires examining a website across twelve distinct criteria. Each criterion addresses a specific dimension of how AI systems discover, interpret, and ultimately decide whether to cite a source. Some are technical. Some are content-driven. All of them matter.

Schema and SSR Rendering

This is the foundation everything else builds on. AI crawlers need to see your content in the raw HTML response, not after JavaScript execution. Server-side rendering ensures every crawler (from Googlebot to GPTBot to ClaudeBot) receives the same complete content on the first request.

However, SSR alone is insufficient. Structured data markup, implemented as JSON-LD (which Google and Microsoft have both publicly endorsed for their generative AI features), tells AI systems what your content represents in machine-readable terms. An analysis of 73 websites found that those with properly implemented structured data were cited in AI responses 3.2 times more often than those without it.

Minimum viable schema in 2026 includes WebSite, WebPage, Organization, ProfessionalService, FAQPage, Article, and Person types. Most websites deploy only two or three of these. The gap between what SSR can deliver and what organizations actually configure represents the single lowest-effort, highest-impact opportunity in AIO readiness.
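One way to measure that gap is to list the schema types a page actually ships in its raw HTML and compare them with the seven named above. The sketch below assumes the structured data is delivered as JSON-LD script blocks; the helper names and the sample page are illustrative only.

```python
import json
from html.parser import HTMLParser

# The seven types this article lists as the 2026 minimum.
RECOMMENDED_TYPES = {
    "WebSite", "WebPage", "Organization", "ProfessionalService",
    "FAQPage", "Article", "Person",
}


class JsonLdCollector(HTMLParser):
    """Collects the body of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def _collect_types(node, found):
    """Walk parsed JSON-LD and record every @type value, including nested ones."""
    if isinstance(node, dict):
        declared = node.get("@type")
        if isinstance(declared, str):
            found.add(declared)
        elif isinstance(declared, list):
            found.update(t for t in declared if isinstance(t, str))
        for value in node.values():
            _collect_types(value, found)
    elif isinstance(node, list):
        for item in node:
            _collect_types(item, found)


def schema_types_in_html(html: str) -> set:
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    found = set()
    for block in collector.blocks:
        try:
            _collect_types(json.loads(block), found)
        except json.JSONDecodeError:
            continue  # skip malformed blocks rather than fail the whole audit
    return found


def missing_schema_types(html: str) -> set:
    return RECOMMENDED_TYPES - schema_types_in_html(html)


# Hypothetical page that ships only WebPage and a nested Organization.
sample = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "WebPage",
 "publisher": {"@type": "Organization", "name": "Example Co"}}
</script></head><body></body></html>"""

print(sorted(missing_schema_types(sample)))
```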

E-E-A-T Signals

Google's Experience, Expertise, Authoritativeness, and Trustworthiness framework has evolved from a content quality guideline into a critical AI visibility filter. Research shows a 0.81 correlation between verified E-E-A-T signals and AI Overview citation, with 96% of cited content coming from sources that demonstrate these qualities.

For AI systems, E-E-A-T manifests through specific, verifiable signals: author attribution with Person schema, bylines linked to professional profiles with documented credentials, editorial policies, and trust indicators like certifications, awards, and industry partnerships. Anonymous content or content attributed to a generic "editorial team" performs measurably worse in AI citation than content tied to named experts with verifiable backgrounds.
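As a rough illustration, the check below flags Article markup that lacks a named Person author with a linked profile. The rule set is our simplification of the signals described above, and the sample records (including the author name and profile URL) are hypothetical.

```python
def author_gap(article: dict):
    """Return a reason string if the Article lacks a named, linked Person author.

    Returns None when at least one author is a Person with a name and a
    profile link (sameAs or url).
    """
    author = article.get("author")
    if not author:
        return "no author property"
    authors = author if isinstance(author, list) else [author]
    people = [
        a for a in authors
        if isinstance(a, dict) and a.get("@type") == "Person" and a.get("name")
    ]
    if not people:
        return "author is not a named Person"
    if not any(p.get("sameAs") or p.get("url") for p in people):
        return "Person author has no linked profile (sameAs or url)"
    return None


# Hypothetical examples: a generic team byline versus a named expert.
anonymous = {
    "@type": "Article",
    "headline": "Five Trends in Cloud Security",
    "author": {"@type": "Organization", "name": "Editorial Team"},
}
attributed = {
    "@type": "Article",
    "headline": "Five Trends in Cloud Security",
    "author": {
        "@type": "Person",
        "name": "Jane Doe",
        "jobTitle": "Principal Security Architect",
        "sameAs": ["https://www.linkedin.com/in/jane-doe-example"],
    },
}

print(author_gap(anonymous))
print(author_gap(attributed))
```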

Content Depth and Substance

AI systems prefer substantive, detailed content because it provides more material to extract, synthesize, and cite. Thin pages — those with fewer than 300 words of meaningful content — rarely earn AI citations regardless of how well they rank in traditional search.

Service pages averaging 430 words, for instance, give AI models significantly less to work with than competitor pages averaging 800 or more. The depth question is not about word count for its own sake but about whether the content provides enough specific, attributable information for an AI system to confidently reference it when constructing an answer.
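Word count is only a proxy for depth, but it is an easy first screen. This sketch counts words in already-extracted page text and lists pages that fall under a threshold; the 300-word default comes from the definition of thin pages above, while the page paths and contents are placeholders.

```python
import re

THIN_PAGE_WORDS = 300  # the article's threshold for a "thin" page


def word_count(text: str) -> int:
    """Count words, treating hyphenated and apostrophized forms as one word."""
    return len(re.findall(r"[A-Za-z0-9]+(?:['-][A-Za-z0-9]+)*", text))


def thin_pages(pages: dict, threshold: int = THIN_PAGE_WORDS) -> list:
    """Return (path, word_count) pairs under the threshold, shortest first."""
    counts = [(path, word_count(body)) for path, body in pages.items()]
    return sorted((c for c in counts if c[1] < threshold), key=lambda c: c[1])


# Placeholder page bodies standing in for extracted page text.
pages = {
    "/services/cloud": "word " * 430,
    "/services/audit": "word " * 120,
    "/insights/geo-guide": "word " * 900,
}

print(thin_pages(pages))                 # pages under 300 words
print(thin_pages(pages, threshold=800))  # pages under an 800-word competitor benchmark
```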

Freshness and Recency

AI systems heavily weight content freshness when determining citation eligibility. The dateModified property in WebPage schema, visible publication dates, and copyright year signals all feed into recency calculations. A site with a homepage last modified in December 2025 and a contact page updated in February 2026 sends mixed signals about how current its information is.

Content without visible dates presents a different problem: when an AI system cannot determine when content was created or last updated, it has less confidence in citing it, particularly for topics where accuracy depends on timeliness.
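A small sketch of how those date signals might be emitted and monitored: it builds WebPage markup with datePublished and dateModified in ISO 8601 form and reports how many days have passed since the last modification. The URL, page name, and dates are hypothetical, and the staleness calculation is our own convenience, not a documented ranking rule.

```python
from datetime import date


def webpage_schema(url: str, name: str, published: date, modified: date) -> dict:
    """Build WebPage JSON-LD with explicit publication and modification dates."""
    if modified < published:
        raise ValueError("dateModified cannot precede datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "url": url,
        "name": name,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


def days_since_modified(schema: dict, today: date) -> int:
    """Days elapsed between the schema's dateModified and the given date."""
    return (today - date.fromisoformat(schema["dateModified"])).days


# Hypothetical page record.
page = webpage_schema(
    "https://www.example.com/services",
    "Services",
    published=date(2025, 12, 1),
    modified=date(2026, 2, 10),
)

print(page["dateModified"])
print(days_since_modified(page, date(2026, 3, 1)))
```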

Structural Clarity

Single H1 tags, logical H2/H3 nesting, and semantic HTML elements like <main>, <nav>, <section>, and <article> help AI systems parse content hierarchy and extract information with confidence. A page that jumps from H1 to H3 (skipping H2) or splits a heading across multiple HTML elements introduces ambiguity that human readers might not notice but that AI parsers struggle with.

Structural clarity also extends to how listing and archival pages are organized. Generic <div> wrappers around content cards are less semantically meaningful than <article> elements, which explicitly signal that each card represents a distinct piece of content.
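The heading rules above are simple enough to check automatically. The following sketch, with hypothetical helper names, reports pages that do not have exactly one H1 or that skip a heading level on the way down.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Records heading levels (1 to 6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list:
    """Return human-readable problems with the page's heading hierarchy."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    issues = []
    h1_count = collector.levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    for prev, cur in zip(collector.levels, collector.levels[1:]):
        if cur > prev + 1:  # moving deeper by more than one level
            issues.append(f"h{prev} followed by h{cur} (skipped level)")
    return issues


skipping = "<h1>Services</h1><h3>Cloud</h3>"
clean = "<h1>Services</h1><h2>Cloud</h2><h3>Migration</h3><h2>Data</h2>"

print(heading_issues(skipping))
print(heading_issues(clean))
```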

FAQ and Q&A Content

This criterion is where many organizations have the most room to improve. Zero FAQ sections across an entire website means zero question-formatted content for AI systems to extract — and question-answer pairs map directly to how users query AI engines.

Pages with FAQPage schema markup are 3.2 times more likely to appear in Google AI Overviews for a straightforward reason: when a user asks an AI system a question, the system looks for content that is already structured as a direct answer. FAQ content, particularly when paired with FAQPage schema, provides exactly that structure. Five to eight well-crafted Q&A pairs on key service or product pages can transform a site's AI citation eligibility overnight.
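For reference, FAQPage markup follows a fixed shape on schema.org: a mainEntity list of Question items, each with an acceptedAnswer. The sketch below generates that structure from question-answer pairs and wraps it in a JSON-LD script tag; the helper names and the sample questions are illustrative.

```python
import json


def faq_page_schema(pairs) -> dict:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    pairs = list(pairs)
    if not pairs:
        raise ValueError("at least one question-answer pair is required")
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(schema: dict) -> str:
    """Serialize the schema for inclusion in server-rendered HTML."""
    # Escape "</" so text inside an answer cannot close the script element early.
    payload = json.dumps(schema, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical Q&A content for a service page.
faq = faq_page_schema([
    ("How long does a cloud migration take?",
     "Most mid-sized migrations take three to six months."),
    ("Do you support hybrid environments?",
     "Yes, we support hybrid and multi-cloud deployments."),
])

print(as_script_tag(faq))
```

The visible page should contain the same questions and answers as the markup, so that the structured data describes content a reader can actually see.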

Brand and Entity Definition

AI systems build internal knowledge graphs of the entities they encounter. An Organization schema with sameAs links to LinkedIn, YouTube, Facebook, and other platforms helps AI systems connect a brand's web presence into a single, coherent entity. Without this schema, AI systems rely on text parsing to perform entity recognition, a far less reliable process.

Consistent branding reinforces entity definition. When a company's name, tagline, and value proposition appear consistently across the site, AI systems build stronger entity associations. Inconsistencies — a different company name format on different pages, conflicting claims about capabilities — erode that confidence.
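A minimal sketch of the Organization markup described above, assuming a hypothetical company and placeholder profile URLs. The helper drops duplicate and non-HTTPS links so the sameAs list stays clean; that filtering is our own choice, not a schema.org requirement.

```python
import json


def organization_schema(name: str, url: str, same_as) -> dict:
    """Build Organization JSON-LD with de-duplicated sameAs profile links."""
    profiles = []
    for link in same_as:
        link = link.strip()
        if link.startswith("https://") and link not in profiles:
            profiles.append(link)
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": profiles,
    }


# Hypothetical brand and profile URLs.
org = organization_schema(
    "Example Co",
    "https://www.example.com",
    [
        "https://www.linkedin.com/company/example-co",
        "https://www.youtube.com/@exampleco",
        "https://www.linkedin.com/company/example-co",  # duplicate, dropped
        "http://insecure.example.com/profile",           # not https, dropped
    ],
)

print(json.dumps(org, indent=2))
```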

Technical AIO Infrastructure

Evaluating this criterion means assessing the technical backbone that makes everything else possible: fast load times on modern hosting infrastructure, canonical URLs on every page, viewport meta tags for mobile rendering, Open Graph and Twitter Card meta tags that provide AI systems with structured summary data, and complete meta tag coverage across all pages.

A Next.js application on Vercel with full SSR, for example, provides an exceptional technical foundation: every AI crawler sees identical, complete content, there is no JavaScript dependency for core content delivery, and the infrastructure is already doing the hardest part of AIO readiness. What often lags behind is the configuration layer: the schema types, the content formatting, and the entity signals that transform a fast, accessible site into one that AI systems actively want to cite.
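The meta tag portion of this criterion can be spot-checked from raw HTML. The sketch below looks for a canonical link, a viewport tag, and a small set of Open Graph and Twitter Card tags; the specific tag list in `REQUIRED_SIGNALS` is an assumption about what "complete coverage" means, so adjust it to your own standard.

```python
from html.parser import HTMLParser

# Assumed minimum set of head signals; not an official checklist.
REQUIRED_SIGNALS = {"canonical", "viewport", "og:title", "og:description", "twitter:card"}


class HeadSignals(HTMLParser):
    """Records which required link and meta tags are present with a value."""

    def __init__(self):
        super().__init__()
        self.found = set()

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "link":
            rel = (attributes.get("rel") or "").lower()
            if rel == "canonical" and attributes.get("href"):
                self.found.add("canonical")
        elif tag == "meta":
            key = (attributes.get("name") or attributes.get("property") or "").lower()
            if key in REQUIRED_SIGNALS and attributes.get("content"):
                self.found.add(key)


def missing_head_signals(html: str) -> list:
    parser = HeadSignals()
    parser.feed(html)
    parser.close()
    return sorted(REQUIRED_SIGNALS - parser.found)


# Hypothetical page head with partial coverage.
sample_head = """<head>
<link rel="canonical" href="https://www.example.com/services">
<meta name="viewport" content="width=device-width, initial-scale=1">
<meta property="og:title" content="Services | Example Co" />
</head>"""

print(missing_head_signals(sample_head))
```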

Citation and Source Attribution

Content that demonstrates its claims through citations and data attribution gets prioritized by AI systems. Research into AI Overview citation patterns reveals that 67% of cited content includes direct expert quotes, 78% features numerical data with source attribution, and 85% comes from domains with established topical authority.

Adding statistics with cited sources, referencing industry research by name, and linking to authoritative external resources all increase an organization's citation-worthiness. It mirrors how academic publishing works: content that cites its sources is treated as more credible than content that makes claims without evidence.

Multi-Modal Content

AI systems are increasingly capable of processing images, video, and structured visual data alongside text. Descriptive alt text on images serves dual purposes: accessibility compliance and AI content comprehension. A site with 15% alt text coverage (where 85% of images have empty or generic alt attributes) is leaving significant contextual information inaccessible to AI crawlers.

Video content, data visualizations, and interactive elements provide additional signals about content depth and relevance. These multi-modal signals will only grow in importance as AI systems become more sophisticated in processing non-text content.
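Alt text coverage of the kind quoted above can be estimated with a short script. This sketch counts images whose alt attribute is non-empty and not a generic placeholder; the `GENERIC_ALTS` list is an assumption, and purely decorative images that correctly use an empty alt will lower the score, so treat the result as a prompt for review rather than a verdict.

```python
from html.parser import HTMLParser

# Placeholder values we treat as non-descriptive (an assumption, extend as needed).
GENERIC_ALTS = {"image", "photo", "picture", "graphic", "img"}


class AltAudit(HTMLParser):
    """Counts images and how many carry descriptive alt text."""

    def __init__(self):
        super().__init__()
        self.total = 0
        self.described = 0

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        self.total += 1
        alt = (dict(attrs).get("alt") or "").strip().lower()
        if alt and alt not in GENERIC_ALTS:
            self.described += 1


def alt_text_coverage(html: str) -> float:
    """Percentage of images with descriptive alt text (100.0 if no images)."""
    audit = AltAudit()
    audit.feed(html)
    audit.close()
    if audit.total == 0:
        return 100.0
    return 100.0 * audit.described / audit.total


sample_images = """
<img src="team.jpg" alt="Engineering team reviewing a migration plan">
<img src="hero.jpg" alt="">
<img src="chart.png" alt="image">
<img src="logo.svg">
"""

print(alt_text_coverage(sample_images))
```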

Internal Linking and Content Architecture

The way pages link to each other creates a map that AI systems use to understand content relationships and site authority structure. Strong internal linking from the homepage (38 or more links) combined with deep interlinking across service pages, case studies, and articles helps AI systems understand which content is most important and how topics relate.

Content architecture also influences crawl depth. AI crawlers allocate finite resources to each domain. A clear hierarchy with logical linking ensures that high-value content is discoverable within one or two clicks of the homepage, maximizing the likelihood that AI systems index the pages that matter most.
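Click depth can be computed with a breadth-first search over the internal link graph. The sketch below assumes you already have a mapping from each page to the pages it links to (for example, from a site crawl); the graph shown is hypothetical, and the two-click limit mirrors the guideline above.

```python
from collections import deque


def click_depths(links: dict, start: str = "/") -> dict:
    """Breadth-first search: minimum number of clicks from start to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def buried_pages(links: dict, max_depth: int = 2, start: str = "/") -> list:
    """Pages deeper than max_depth clicks, including pages that are unreachable."""
    depths = click_depths(links, start)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(p for p in all_pages if depths.get(p, float("inf")) > max_depth)


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/services", "/insights"],
    "/services": ["/services/cloud"],
    "/insights": ["/insights/2026"],
    "/insights/2026": ["/insights/2026/geo-guide"],
    "/orphan": [],
}

print(click_depths(site_links))
print(buried_pages(site_links))
```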

Competitive AIO Positioning

AIO readiness never exists in a vacuum; an organization's AI visibility is always relative to its competitors. If a competitor deploys five or more schema types while a site only has three, the competitor's content provides AI systems with richer structured data to work with. If competitors have FAQ sections with FAQPage schema on their service pages while another site has none, the competitor's content is more directly citable for question-based queries.

Competitive AIO auditing examines not just what a site is doing, but what it is not doing that others in the same space already are. This relative positioning often reveals where the highest-impact improvements lie — and where the window for first-mover advantage is closing.

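The 12-Point AIO Audit at a Glance

1. Schema and SSR Rendering
2. E-E-A-T Signals
3. Content Depth and Substance
4. Freshness and Recency
5. Structural Clarity
6. FAQ and Q&A Content
7. Brand and Entity Definition
8. Technical AIO Infrastructure
9. Citation and Source Attribution
10. Multi-Modal Content
11. Internal Linking and Content Architecture
12. Competitive AIO Positioning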

Understanding the Five Major AI Crawlers

Knowing which AI systems crawl the web, and what each one can actually read, determines which optimization priorities matter most.

Googlebot remains the most capable crawler, rendering JavaScript and accessing full page content including dynamically loaded elements. It sees everything, but that does not mean Google's AI Overview cites everything it sees. The AI Overview layer applies its own citation logic on top of what Googlebot indexes, favoring content with strong schema, clear structure, and authoritative signals.

GPTBot, operated by OpenAI for ChatGPT, does not render JavaScript and reads raw HTML, headings, meta tags, and any schema delivered server-side. Missing Organization or Service schema means ChatGPT's entity recognition relies entirely on text parsing, a less precise method that reduces the likelihood of accurate brand mentions.

ClaudeBot, operated by Anthropic, does not execute JavaScript, reads SSR-delivered HTML, and relies on semantic structure and internal linking for context. Zero FAQ content and zero citations reduce the probability that Claude will surface a site's content when users ask relevant questions.

PerplexityBot accesses raw HTML and schema, with BreadcrumbList providing navigational hierarchy. However, without question-answer formatted content, the probability of earning a direct answer citation from Perplexity drops to near zero.

Bingbot, which powers Microsoft Copilot, does render JavaScript and has access to the full schema stack plus OG and Twitter Card metadata. The dateModified timestamp in WebPage schema informs freshness calculations. Sparse schema — even with full content access — limits how effectively Copilot can integrate a site's information into its knowledge graph.

Each crawler has different capabilities, but they share a common dependency: structured, accessible, well-attributed content delivered in the initial HTML response. This is the foundation the 12-point audit framework is designed to evaluate.

The Academic Foundation: What the Research Shows

Generative engine optimization has moved beyond speculation into peer-reviewed research. In 2024, researchers from Princeton, Georgia Tech, the Allen Institute for AI, and IIT Delhi published the foundational GEO paper at KDD 2024 (the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining). Their work introduced GEO-bench, a large-scale benchmark of 10,000 diverse queries, and tested nine distinct content optimization methods.

Their findings challenged several assumptions that SEO professionals had carried into the AI era. Traditional keyword optimization tactics performed poorly in generative contexts, while the strategies that demonstrated the strongest results were adding statistics with citations, including quotations from relevant sources, and establishing authoritativeness through demonstrable expertise. GEO techniques improved visibility by up to 40% in generative engine responses.

Critically, the research showed that optimization effectiveness varies significantly across domains. A strategy that works well for technology content may perform differently for healthcare or financial services. This domain-specific variability underscores why a structured audit approach (one that evaluates each optimization dimension independently) produces better outcomes than applying generic best practices across the board.

The Convergence of SEO and GEO

A common misconception positions GEO as a replacement for SEO, but the data tells a different story. Analysis shows that 99% of URLs cited in AI Overviews also rank in the top 10 of Google's organic search results. Being cited by AI and ranking well in traditional search are not competing objectives but complementary layers of the same visibility strategy.

Lily Ray, one of the industry's most cited voices on AI search, has observed that many optimization tactics being discussed under the GEO banner are updated best practices that have always mattered: structured, high-quality content, clear answers, brand authority, and technical excellence. The difference is the stakes. In a traditional search environment, mediocre SEO meant lower rankings. In an AI-driven environment, the gap between being cited and being invisible is binary.

Blog content remains the number one page type cited in AI Overviews, according to Conductor's 2026 analysis. This aligns with what the 12-point framework evaluates: content depth, question-formatted structure, source attribution, and author expertise combine to make blog and long-form content the highest-performing format for AI citation.

For organizations, the key takeaway is not to abandon SEO in favor of GEO but to recognize that the bar for what "good SEO" means has risen dramatically. The technical signals, content quality markers, and authority indicators that AI systems use to determine citation-worthiness are a superset of what traditional search algorithms have always valued. An organization that optimizes for AI readiness will, by definition, improve its traditional search performance. The reverse is not necessarily true.

The Gap Between Capability and Configuration

One pattern emerges consistently across AIO audits of enterprise websites: the technical infrastructure is often ahead of the content and configuration layer. Organizations running modern tech stacks (Next.js on Vercel, headless CMS platforms, cloud-native architectures) frequently have the SSR capability to deliver content to every AI crawler. What they lack is the schema breadth, the content depth, the FAQ structure, and the entity signals that turn accessible content into citable content.

This gap is actually encouraging news because the path to AIO readiness is often configuration and content work, not architectural overhaul. Deploying Organization schema with sameAs links, adding ProfessionalService schema for each service offering, creating FAQ sections with FAQPage markup on high-traffic pages, and building author attribution across existing content are all achievable within 30 to 90 days for most organizations with modern technical foundations.

The competitive implications of this gap are significant. Because most organizations have not yet closed it, those that do gain a meaningful first-mover advantage in AI visibility. By early 2026, most enterprise marketing teams have a GEO initiative in some form, but most small and mid-market organizations have not started. McKinsey's 2025 CMO survey found that only 16% of brands systematically track AI search performance. The opportunity is clear, and it will not remain open indefinitely.

Measuring What Matters

Traditional SEO metrics — keyword rankings, organic sessions, click-through rates — remain relevant but insufficient for measuring AI visibility. Organizations need to add new measurement dimensions.

AI citation tracking monitors when and where a brand is referenced in AI-generated answers across platforms like ChatGPT, Perplexity, Google AI Overviews, and Copilot. Schema coverage auditing evaluates what percentage of a site's pages carry complete structured data versus the minimum. First-party log analysis (what Lily Ray recommends over synthetic rank tracking tools) reveals actual crawl behavior from AI bots — how often GPTBot, ClaudeBot, and PerplexityBot visit, which pages they request, and what content they can access.
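A first-party log analysis can start very small. The sketch below counts requests per AI crawler and path from access log lines in the common "combined" format, matching on the bot name inside the user-agent string. The sample lines use simplified, illustrative user-agent strings and documentation-range IP addresses; real bot user agents are longer, and the regular expression assumes your server logs in the combined format.

```python
import re
from collections import Counter

# Bot names to look for inside the user-agent field.
AI_BOT_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Combined log format: ... "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def ai_bot_hits(log_lines) -> Counter:
    """Count requests per (bot, path) for the AI crawlers listed above."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # line is not in the expected format
        for bot in AI_BOT_TOKENS:
            if bot in match.group("ua"):
                hits[(bot, match.group("path"))] += 1
                break
    return hits


# Illustrative log lines with simplified user-agent strings.
sample_log = [
    '203.0.113.5 - - [10/Mar/2026:10:00:00 +0000] "GET /services HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [10/Mar/2026:10:01:00 +0000] "GET /services HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.5 - - [10/Mar/2026:10:02:00 +0000] "GET /services HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2026:10:03:00 +0000] "GET /about HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

for (bot, path), count in sorted(ai_bot_hits(sample_log).items()):
    print(bot, path, count)
```

User-agent strings can be spoofed, so counts like these are best read as a starting signal and cross-checked against the IP ranges each operator publishes.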

Content citation rate measures the percentage of high-value pages that appear in AI-generated responses for relevant queries. Competitive citation gap analysis compares an organization's AI visibility against specific competitors. And conversion attribution from AI referral traffic quantifies the economic impact of AI-driven visits compared to other channels.

These metrics are not optional additions to existing dashboards. They represent the measurement framework for a channel that converts at 14.2% (versus 2.8% for traditional Google search) and generates visitors that are 4.4 times more qualified. Ignoring AI referral measurement in 2026 is equivalent to ignoring mobile analytics in 2014 — technically possible, strategically indefensible.

A 90-Day Path to AIO Readiness

For organizations assessing their own AI readiness, the 12-point framework maps naturally to a phased implementation approach.

The first 30 days should target the highest-impact, lowest-effort improvements. Deploying Organization schema with sameAs links is a single SSR component addition on most modern platforms. Adding ProfessionalService schema for each service offering follows the same pattern. De-duplicating title tags, remediating alt text across images, and creating FAQ sections with FAQPage schema on three to four high-traffic pages — these are content and configuration tasks that do not require architectural changes.

Days 31 through 60 should focus on content depth and authority signals. That means building author attribution across all existing content by connecting articles and case studies to named team members with Person schema, bios, credentials, and linked profiles; expanding thin service pages from 300-word overviews to 800-word substantive descriptions; and adding citations and data attribution throughout existing content.

The final phase, days 61 through 90, addresses competitive positioning and measurement. That means implementing AI citation tracking across ChatGPT, Perplexity, Google AI Overviews, and Copilot; setting up first-party log analysis to monitor AI crawler behavior; creating a competitive citation gap analysis framework; and building the reporting infrastructure to quantify AI referral traffic against traditional channels.

This phased approach allows organizations to capture quick wins while building toward comprehensive AIO readiness. It also produces measurable results at each stage — which matters when securing ongoing investment in a discipline that many executive teams are still learning about.

What Comes Next

The market signals point in one direction. Generative AI is projected to power 50% of queries worldwide by 2028, according to McKinsey. Gartner projects that 25% of traditional search volume will disappear by the end of 2026. AI referral traffic could represent 20 to 28% of total web referral traffic by the close of 2026.

The GEO services market reflects this trajectory, growing from $886 million in 2024 to a projected $7.3 billion by 2031 — an eightfold increase at a 34% compound annual growth rate. New professional roles are emerging: Prompt SEO specialists, Overview Optimization analysts, and AI Attribution strategists.

For organizations evaluating their digital strategy, the calculus is straightforward. The AI search channel is smaller than traditional organic but growing at 130 to 150% year-over-year. Its traffic converts at roughly five times the rate of Google organic traffic (14.2% versus 2.8%). The optimization work required to capture it largely reinforces (rather than conflicts with) traditional SEO best practices. And the competitive window for early adoption is open but narrowing as enterprise teams stand up GEO capabilities.

The 12-point framework we have outlined here is not theoretical. Each criterion maps to specific, measurable signals that AI systems use to determine citation eligibility. Organizations that audit their current state against these twelve dimensions can identify precisely where their gaps are, prioritize the highest-impact improvements, and build a roadmap from invisible to citable.

The question is no longer whether AI will change how people discover brands and information online. It already has. The question is whether your website is ready for it.

Key Terminology

AI Overview (AIO)

AI-generated summary that appears at the top of Google search results, synthesizing information from multiple sources to answer queries directly.

Generative Engine Optimization (GEO)

The practice of optimizing content so AI systems like ChatGPT, Perplexity, and Google AI Overviews cite it when answering user questions.

Answer Engine Optimization (AEO)

A related discipline focused on structuring content to appear as direct answers in AI-powered search platforms and voice assistants.

E-E-A-T

Experience, Expertise, Authoritativeness, and Trustworthiness — Google's quality framework that AI systems use to determine citation eligibility.

Server-Side Rendering (SSR)

A technique where web pages are rendered on the server before being sent to the browser, ensuring AI crawlers that cannot execute JavaScript see complete content.

JSON-LD

JavaScript Object Notation for Linked Data — the structured data format endorsed by Google and Microsoft for communicating page meaning to search engines and AI systems.

The Real Cost of Waiting

Many organizations are tempted to treat AIO readiness as something that can wait until the "dust settles" on how AI search evolves. That temptation is understandable: the terminology is new, the measurement tools are still maturing, and the competitive pressure feels more theoretical than the quarterly traffic numbers sitting in Google Analytics.

However, the math does not support waiting. Every month that an organization's content remains invisible to AI crawlers is a month where competitors who have optimized are building citation history, earning AI-driven leads, and training AI systems to associate their brand with the topics that matter. AI models learn from patterns, and an organization that is consistently cited for a topic builds compounding authority over time. An organization that begins optimizing six months from now will need to overcome not just its own gaps but also the citation momentum its competitors have built in the interim.

Mobile optimization in 2013 and 2014 provides an instructive analogy. Organizations that waited for the mobile traffic shift to "prove itself" before investing in responsive design found themselves playing catch-up for years. The organizations that moved early captured audiences that formed lasting brand preferences. AIO readiness is following the same adoption curve, but at a faster pace.

Consider the economics. If AI search traffic converts at five times the rate of traditional organic, and if AI referral traffic is growing at 130 to 150% year-over-year, the revenue opportunity of early optimization compounds rapidly. A B2B organization that captures even a modest share of AI-driven qualified leads at a 14.2% conversion rate will see a measurable impact on pipeline within quarters, not years.
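To put rough numbers on that, the sketch below uses the two conversion rates cited in this article and a hypothetical volume of 10,000 organic visits to show how many AI-referred visits would produce the same number of conversions. The visit volume is an assumption for illustration; substitute your own analytics figures.

```python
import math

AI_SEARCH_RATE = 0.142       # conversion rate cited above for AI search traffic
GOOGLE_ORGANIC_RATE = 0.028  # conversion rate cited above for Google organic


def expected_conversions(visits: int, rate: float) -> float:
    """Expected conversions for a number of visits at a given conversion rate."""
    return visits * rate


def ai_visits_to_match(organic_visits: int) -> int:
    """AI-referred visits needed to equal the conversions from organic_visits."""
    target = expected_conversions(organic_visits, GOOGLE_ORGANIC_RATE)
    return math.ceil(target / AI_SEARCH_RATE)


organic_visits = 10_000  # hypothetical monthly organic sessions

print(round(expected_conversions(organic_visits, GOOGLE_ORGANIC_RATE)))  # 280 conversions
print(ai_visits_to_match(organic_visits))                                # 1972 visits
print(round(AI_SEARCH_RATE / GOOGLE_ORGANIC_RATE, 1))                    # 5.1x rate ratio
```

Under these assumptions, fewer than 2,000 AI-referred visits would match the conversions from 10,000 organic visits, which is why a channel with smaller volume can still carry meaningful pipeline.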

The 12-point audit framework exists precisely because this shift is both urgent and actionable. It translates a complex, evolving landscape into specific, prioritized improvements that organizations can begin implementing today. Not every criterion will be equally relevant to every organization, and not every gap will require the same level of effort to close. However, the organizations that assess where they stand (honestly, against all twelve dimensions) will be the ones that shape their AI visibility rather than being shaped by its absence.

Steve Hamilton

SVP, DXP and Custom Solutions Practice
